1. Introduction to One-API

1.1 One-API Overview

One API is a unified interface management and distribution system that supports various mainstream AI services such as Azure, Anthropic Claude, Google PaLM 2 & Gemini, providing centralized API key management and redistribution capabilities. It is packaged as a single executable file and offers Docker images for easy deployment and out-of-the-box convenience. It is suitable for enterprises, developers, and researchers to simplify the access and management of multiple AI services.

1.2 Supported Large Models

2. This Practice Plan

2.1 Local Environment Planning

This walkthrough uses a personal test environment running Ubuntu 22.04.1 LTS.

| Hostname | IP Address | Operating System Version | Kernel Version | Docker Version | Image Version |
|---|---|---|---|---|---|
| jeven01 | 192.168.3.88 | Ubuntu 22.04.1 LTS | 5.15.0-119-generic | 27.1.1 | latest |

2.2 Introduction to This Practice

1. The deployment environment here is a personal test environment; take extra care before applying these steps in production.

2. The One-API application is deployed in a Docker environment.

3. Check Local Environment

3.1 Check Docker Service Status

Confirm that the Docker service is running before continuing.

3.2 Check Docker Version

Check the installed Docker version. The test host in this walkthrough runs 27.1.1.

3.3 Check Docker Compose Version

Check the Docker Compose version and make sure it is 2.0 or later.
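The three checks in this section can be sketched as follows. This is a minimal sketch rather than the original commands: it assumes a systemd-based host (the default on Ubuntu 22.04) and the Compose v2 plugin (`docker compose`). The `version_ge` helper is hypothetical and only illustrates the version comparison.

```shell
# Hypothetical helper: succeeds when version $1 is >= version $2.
# Relies on `sort -V` (version sort), available in GNU coreutils on Ubuntu.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# 3.1 Service status: prints "active" when the Docker daemon is running.
systemctl is-active docker 2>/dev/null || echo "docker service is not reported as active"

if command -v docker >/dev/null 2>&1; then
    # 3.2 Print the installed Docker version.
    docker --version

    # 3.3 Compose v2 plugin version; --short prints only the number.
    compose_version=$(docker compose version --short 2>/dev/null || true)
    compose_version="${compose_version#v}"   # drop a leading "v" if present
    if [ -n "$compose_version" ] && version_ge "$compose_version" "2.0"; then
        echo "Docker Compose $compose_version is 2.0 or later"
    else
        echo "Docker Compose 2.0 or later was not found"
    fi
else
    echo "docker not found in PATH"
fi
```

If the service is not active, `sudo systemctl start docker` starts it on a systemd host.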

4. Download One-API Image

Pull the One-API image. The image used in this walkthrough is justsong/one-api, with the latest tag listed in the table in section 2.1.
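A minimal sketch of the pull step, assuming the image is fetched from the default registry configured for Docker on the host. The `IMAGE` variable is introduced here only for illustration.

```shell
# Image name from this tutorial; the "latest" tag matches the table in section 2.1.
IMAGE="justsong/one-api:latest"

if command -v docker >/dev/null 2>&1; then
    docker pull "$IMAGE" || echo "pull failed; check network access and the Docker daemon"
    # List the local copy to confirm that the image is present.
    docker images justsong/one-api || true
else
    echo "docker not found; install Docker before pulling $IMAGE"
fi
```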

5. Deploy justsong/one-api Application

5.1 Create Deployment Directory

5.2 Edit Deployment Files

In the deployment directory, create a docker-compose.yaml file. Settings such as the host port mapping can be customized there. This walkthrough uses the SQLite database; for a MySQL deployment, refer to the official documentation.
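Sections 5.1 and 5.2 can be sketched together as follows. This is an illustrative sketch, not the author's original file: the directory path, container name, and time zone are assumptions, and the `/data` volume and port 3000 follow the defaults of the justsong/one-api image. With no database connection string set, One API falls back to SQLite and stores its database under `/data`.

```shell
# Hypothetical deployment directory; set DEPLOY_DIR beforehand to use another path.
DEPLOY_DIR="${DEPLOY_DIR:-$PWD/one-api}"
mkdir -p "$DEPLOY_DIR/data"

# Quoted heredoc delimiter so the YAML is written verbatim, without shell expansion.
cat > "$DEPLOY_DIR/docker-compose.yaml" <<'EOF'
services:
  one-api:
    image: justsong/one-api:latest
    container_name: one-api
    restart: always
    ports:
      - "3000:3000"   # host:container; change the left side to remap the host port
    environment:
      - TZ=Asia/Shanghai   # assumed time zone; adjust as needed
    volumes:
      - ./data:/data   # SQLite database and logs persist here
EOF

echo "wrote $DEPLOY_DIR/docker-compose.yaml"
```

Mapping `./data` to `/data` keeps the SQLite database on the host, so recreating the container does not lose channels or tokens.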

5.3 Create One-API Container

From the deployment directory, create and start the One-API container with Docker Compose.

5.4 Check One-API Container Status

Check the status of the One-API container to ensure it has started normally.
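Sections 5.3 and 5.4 can be sketched together as follows. This is a minimal sketch, not the original commands: it assumes the Compose v2 plugin, a hypothetical deployment directory held in `DEPLOY_DIR`, and a container named `one-api` in the compose file.

```shell
# Hypothetical deployment directory that holds docker-compose.yaml.
DEPLOY_DIR="${DEPLOY_DIR:-$PWD/one-api}"
CONTAINER="one-api"   # assumed container_name in the compose file

if command -v docker >/dev/null 2>&1 && [ -f "$DEPLOY_DIR/docker-compose.yaml" ]; then
    # 5.3 Create and start the container in the background (-d = detached).
    if (cd "$DEPLOY_DIR" && docker compose up -d); then
        deploy_result="started"
    else
        deploy_result="failed"
    fi
    # 5.4 A running container shows a STATUS that begins with "Up".
    docker ps --filter "name=$CONTAINER" || true
else
    deploy_result="skipped"   # docker or the compose file is missing
fi
echo "one-api deployment: $deploy_result"
```

If the container keeps restarting, `docker logs --tail 20 one-api` shows the most recent log lines.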

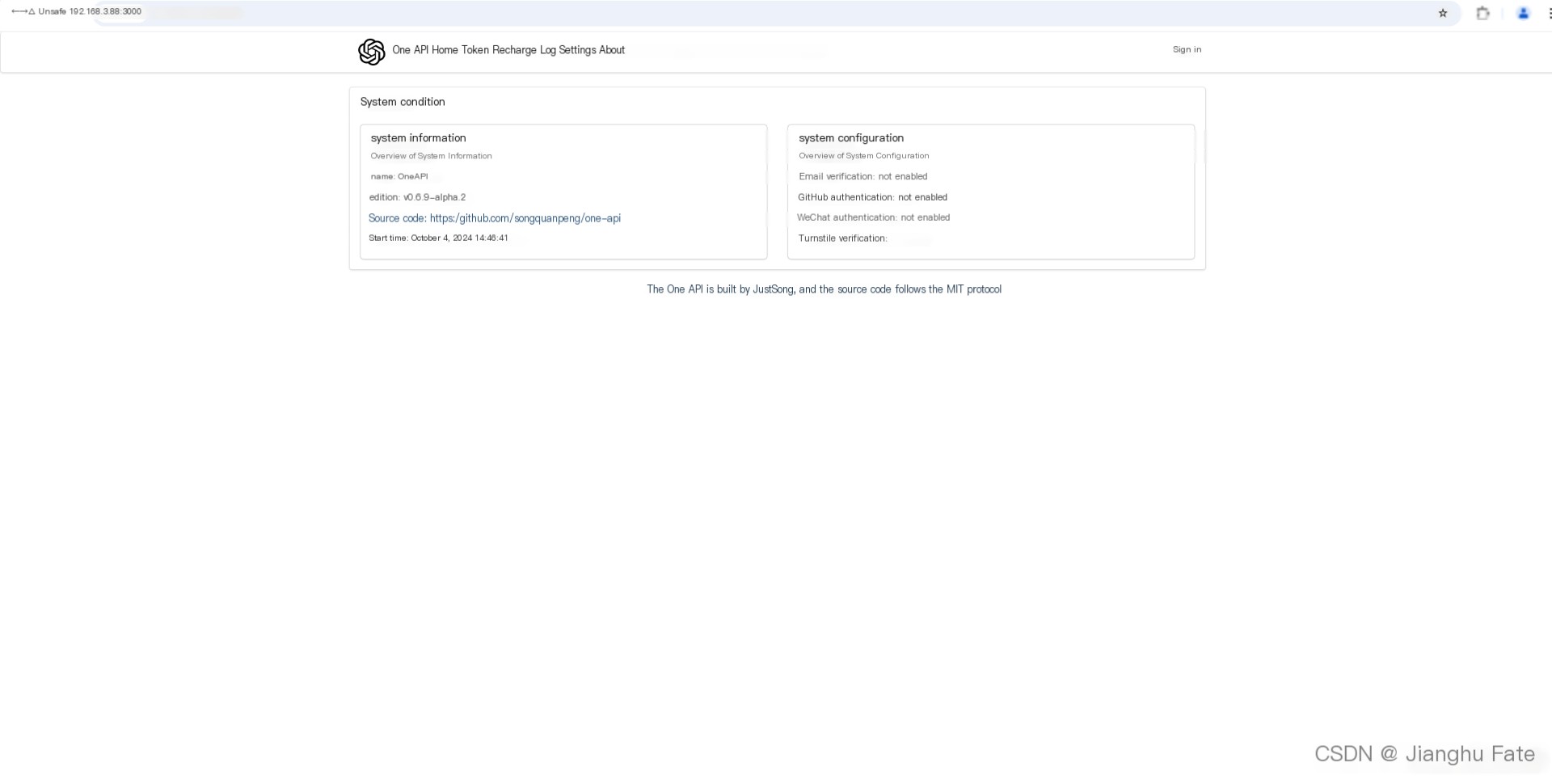

6. Access One-API Service

6.1 Visit Initial Page

Open http://192.168.3.88:3000 in a browser, replacing the IP with your own server’s address. If the page below does not load, check whether the host firewall is blocking the port: either disable the firewall or open port 3000. On a cloud server, also allow the port in the security group rules.

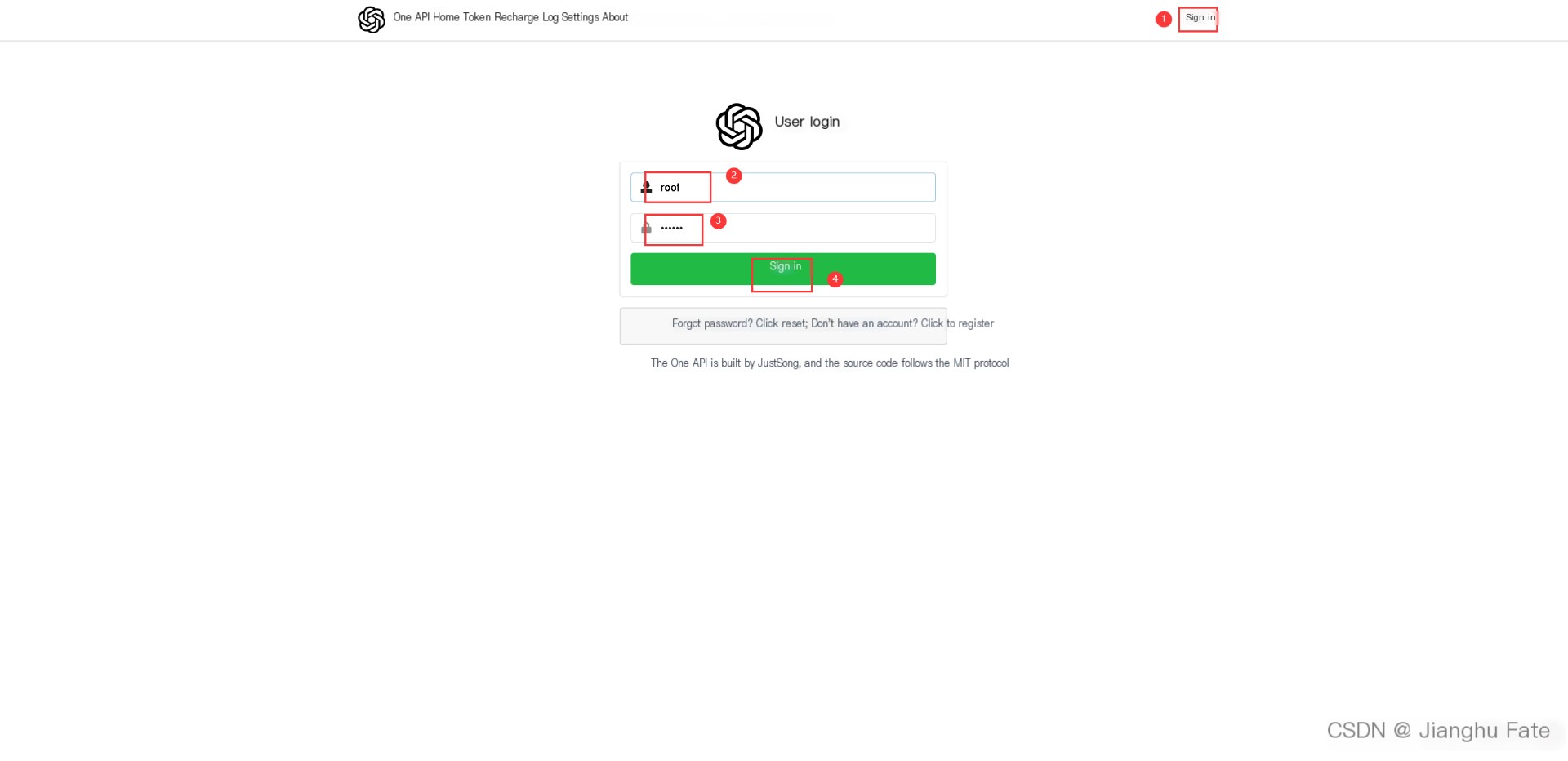
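Before opening a browser, reachability can also be checked from a terminal. The sketch below assumes `curl` is installed; the `ONE_API_URL` variable is introduced only for illustration and defaults to the test host used in this tutorial.

```shell
# Replace with your server's address; 192.168.3.88 is the test host in this tutorial.
ONE_API_URL="${ONE_API_URL:-http://192.168.3.88:3000}"

if command -v curl >/dev/null 2>&1; then
    # Prints the HTTP status code, or 000 when the connection fails or times out.
    http_code=$(curl -s -o /dev/null -m 5 -w '%{http_code}' "$ONE_API_URL" || true)
else
    http_code="000"   # curl is not installed, so nothing was checked
fi
http_code="${http_code:-000}"
echo "GET $ONE_API_URL -> HTTP $http_code"
```

A code of 000 usually means the port is unreachable. On Ubuntu, `sudo ufw status` shows the firewall state and `sudo ufw allow 3000/tcp` opens the port.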

6.2 Log in to One-API

Initial username: root

Initial password: 123456

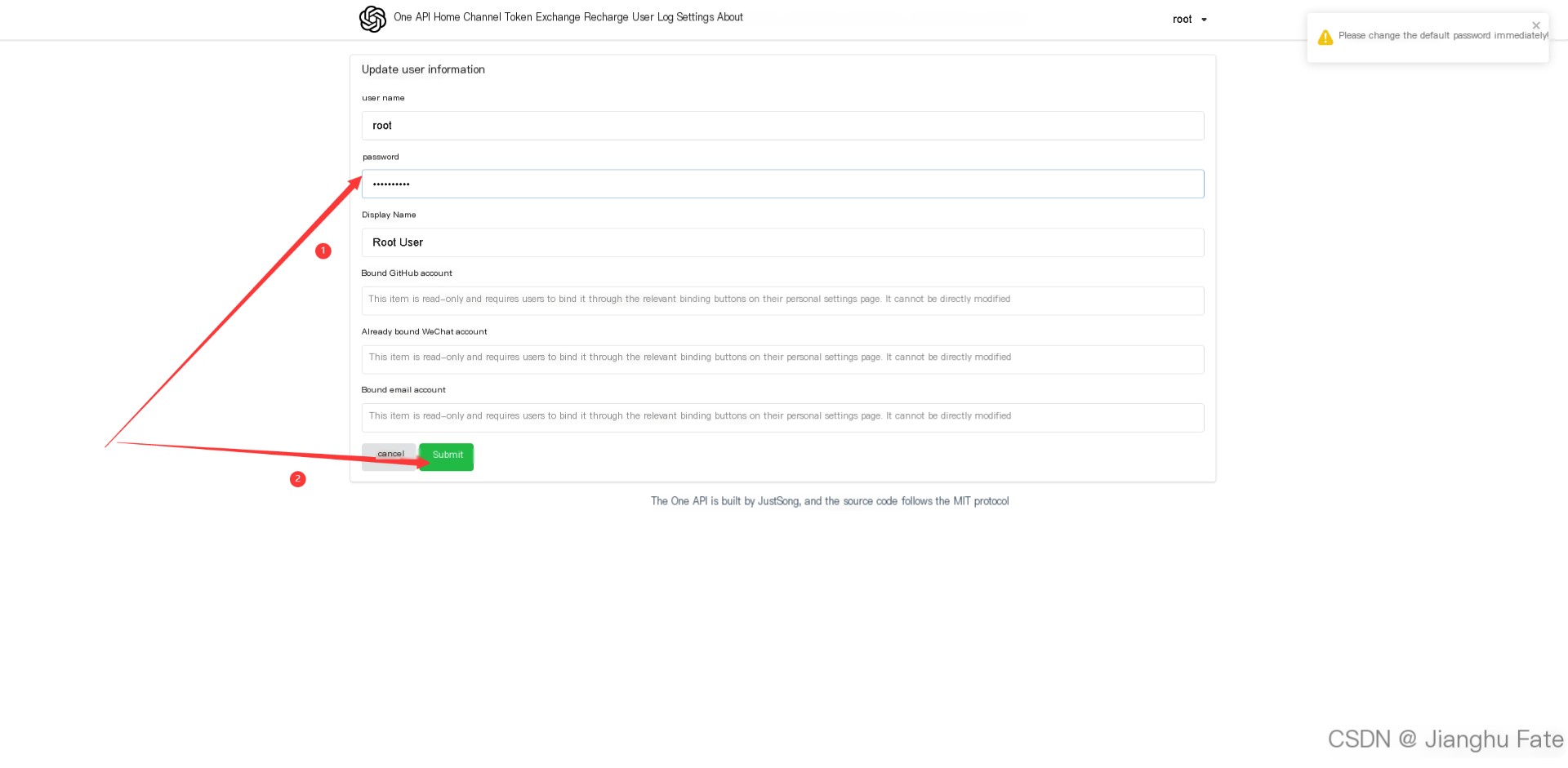

Be sure to change the password after logging in.

7. Configure Large Models

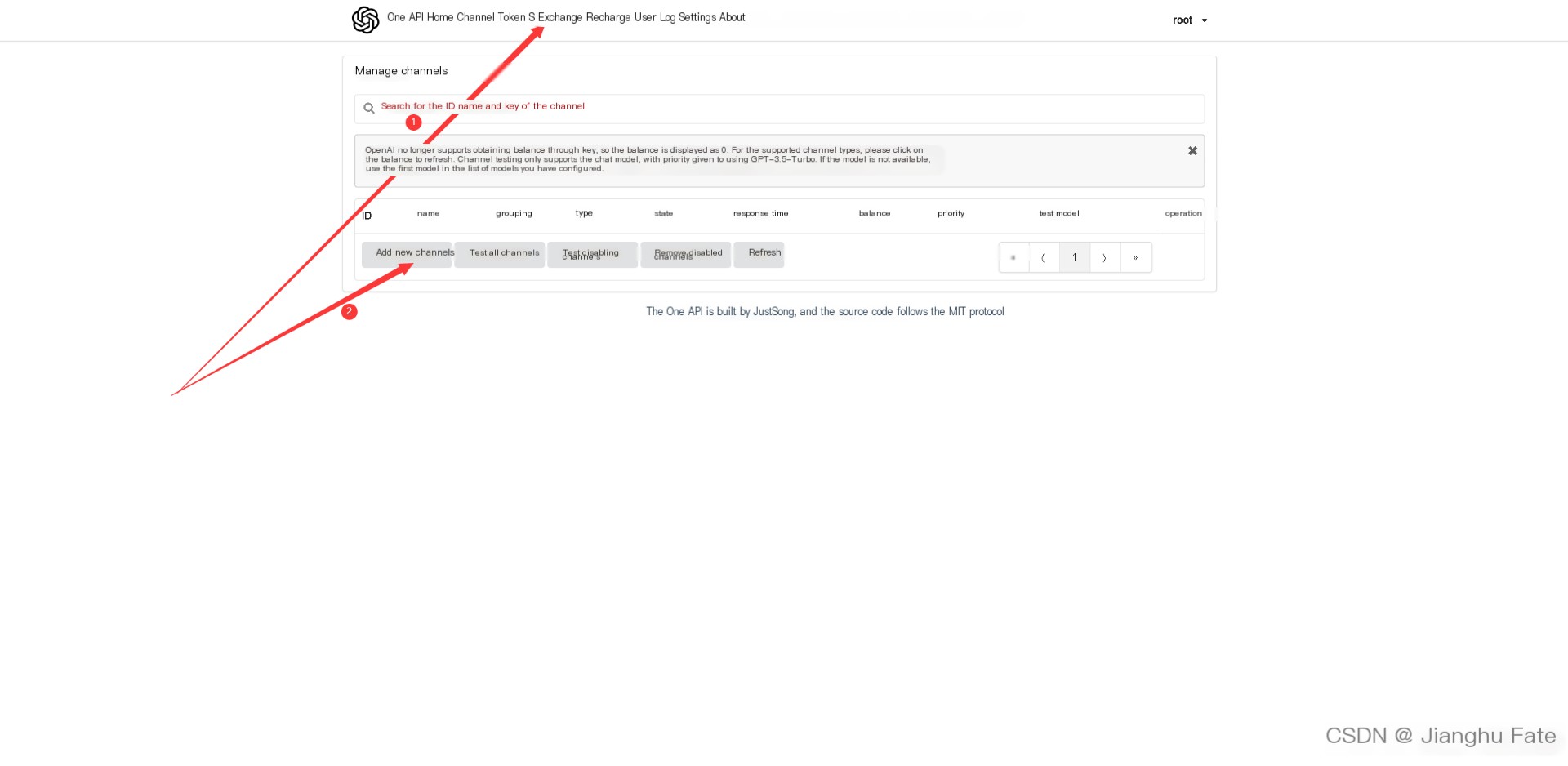

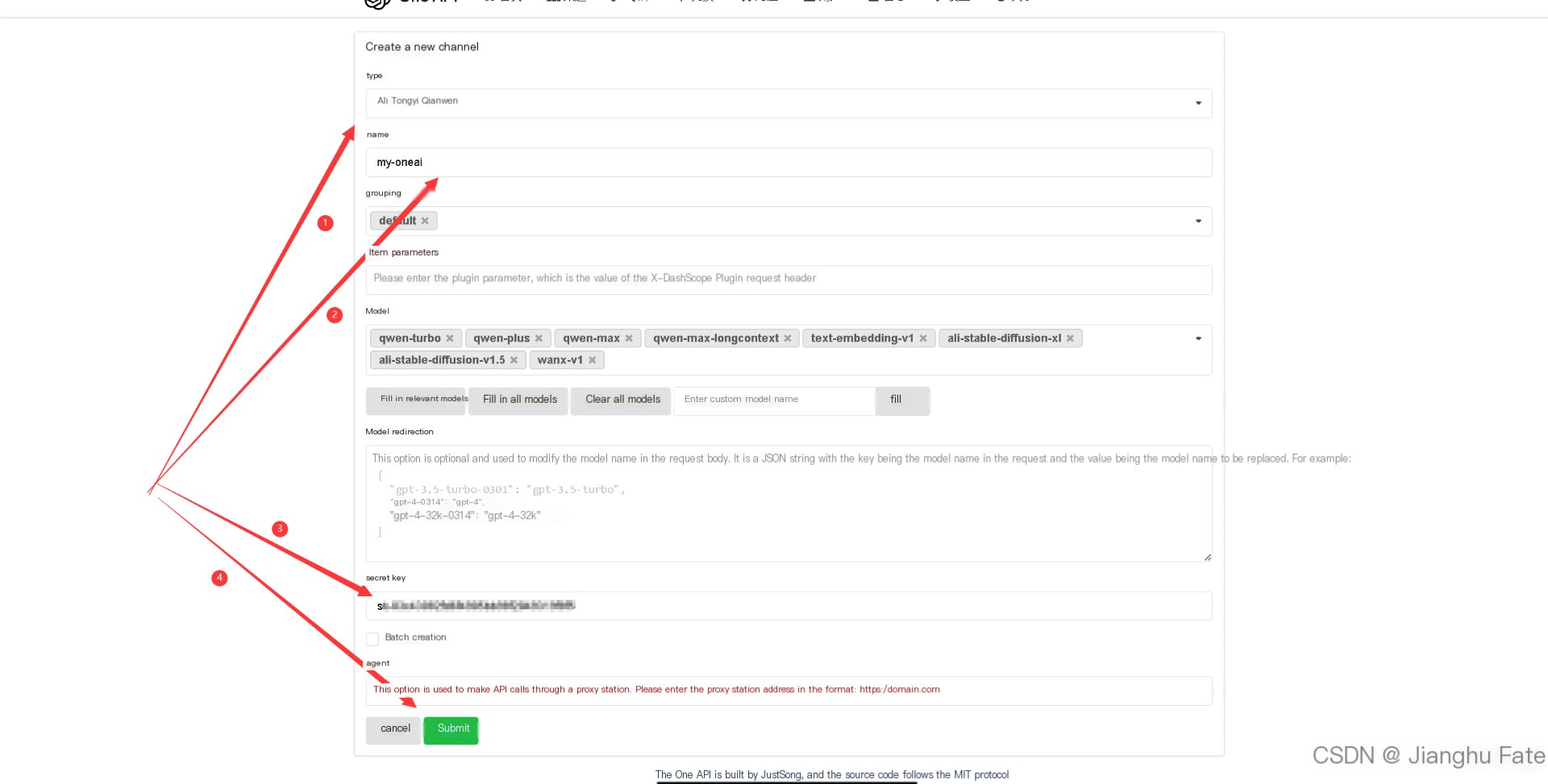

7.1 Add New Channel

Select the Phrase Model, enter an API key, and confirm to add the channel.

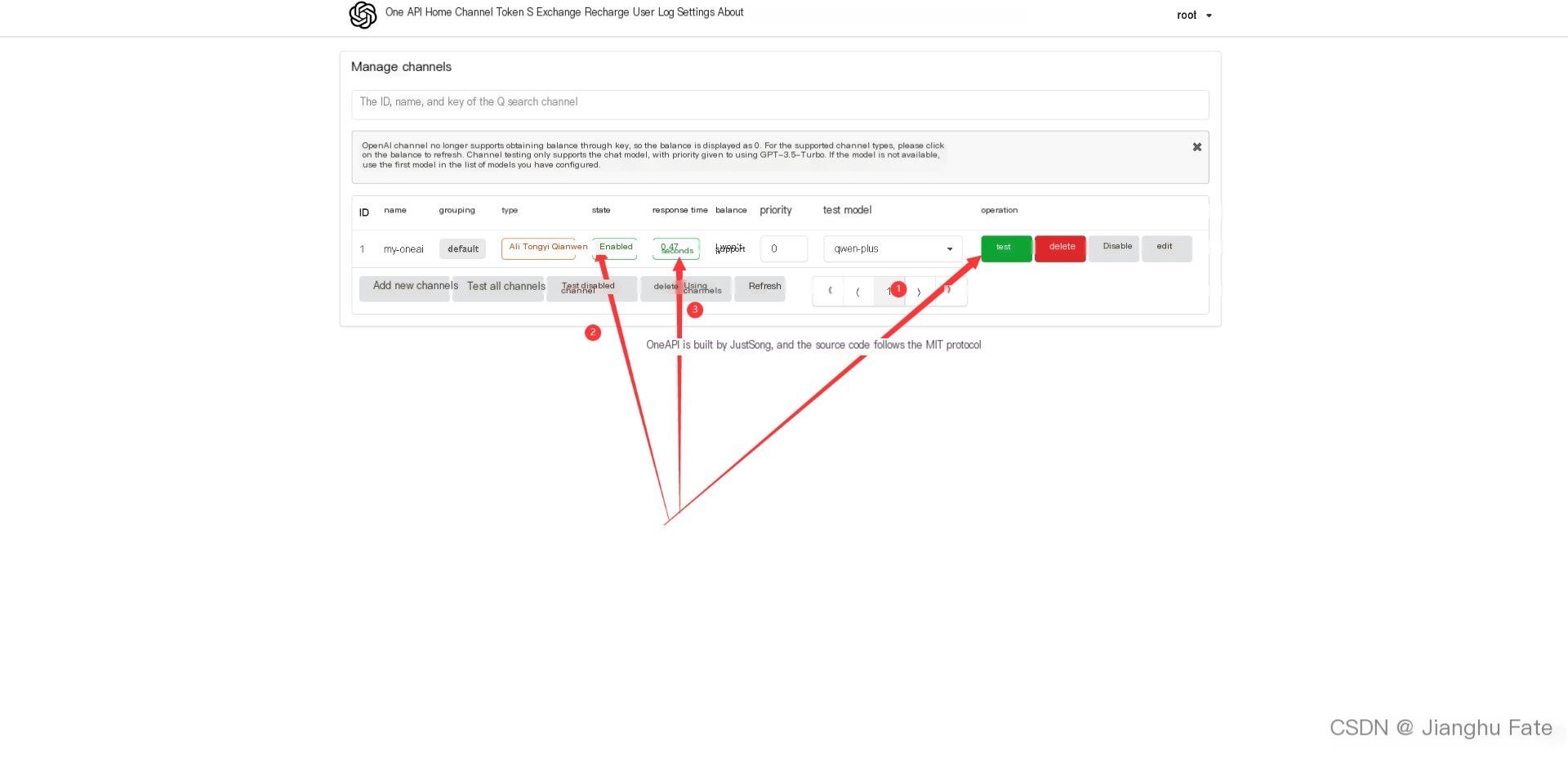

7.2 Check Channel Status

Check the status of the newly added channel. A normal response looks like the screenshot below.

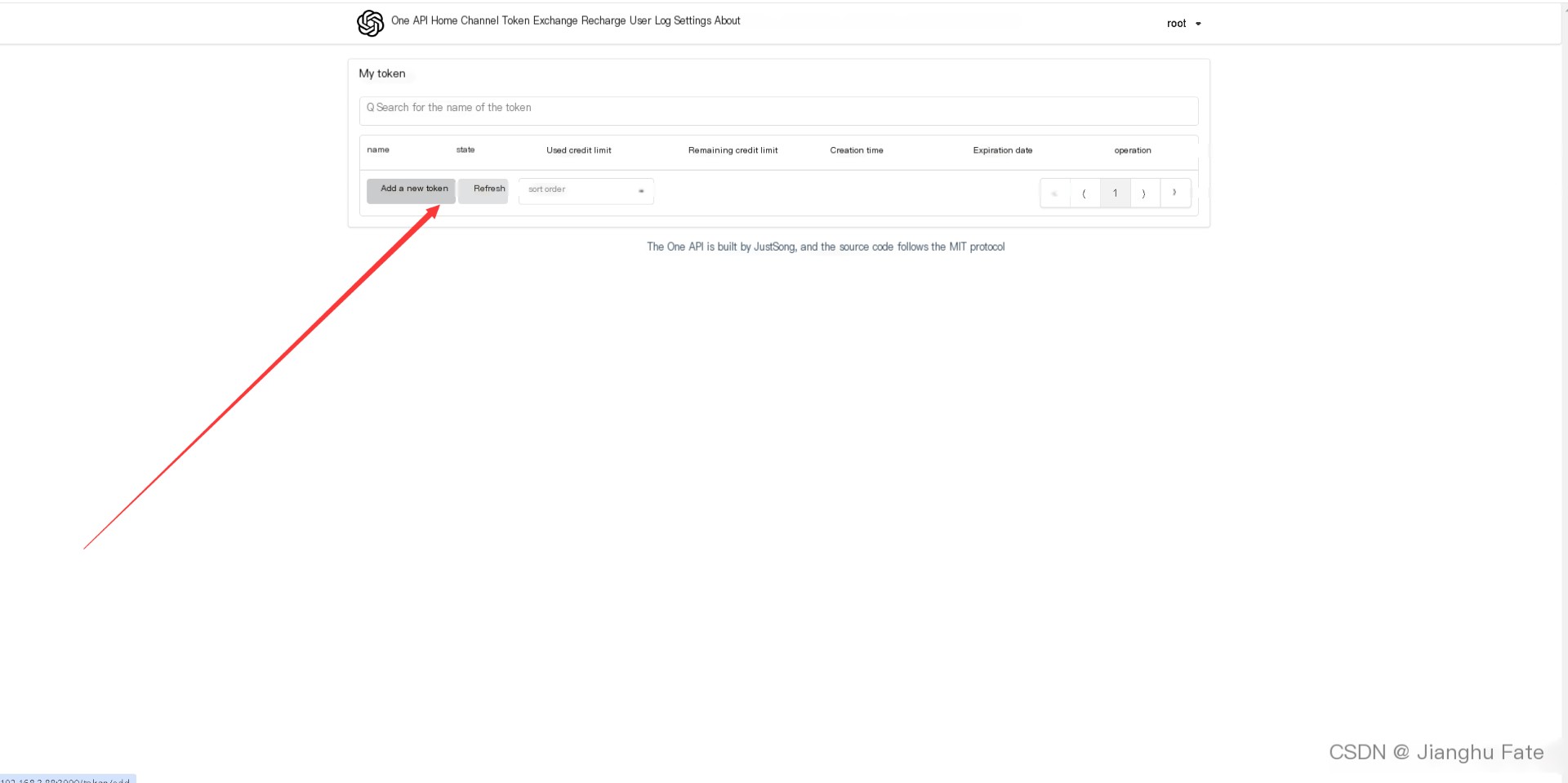

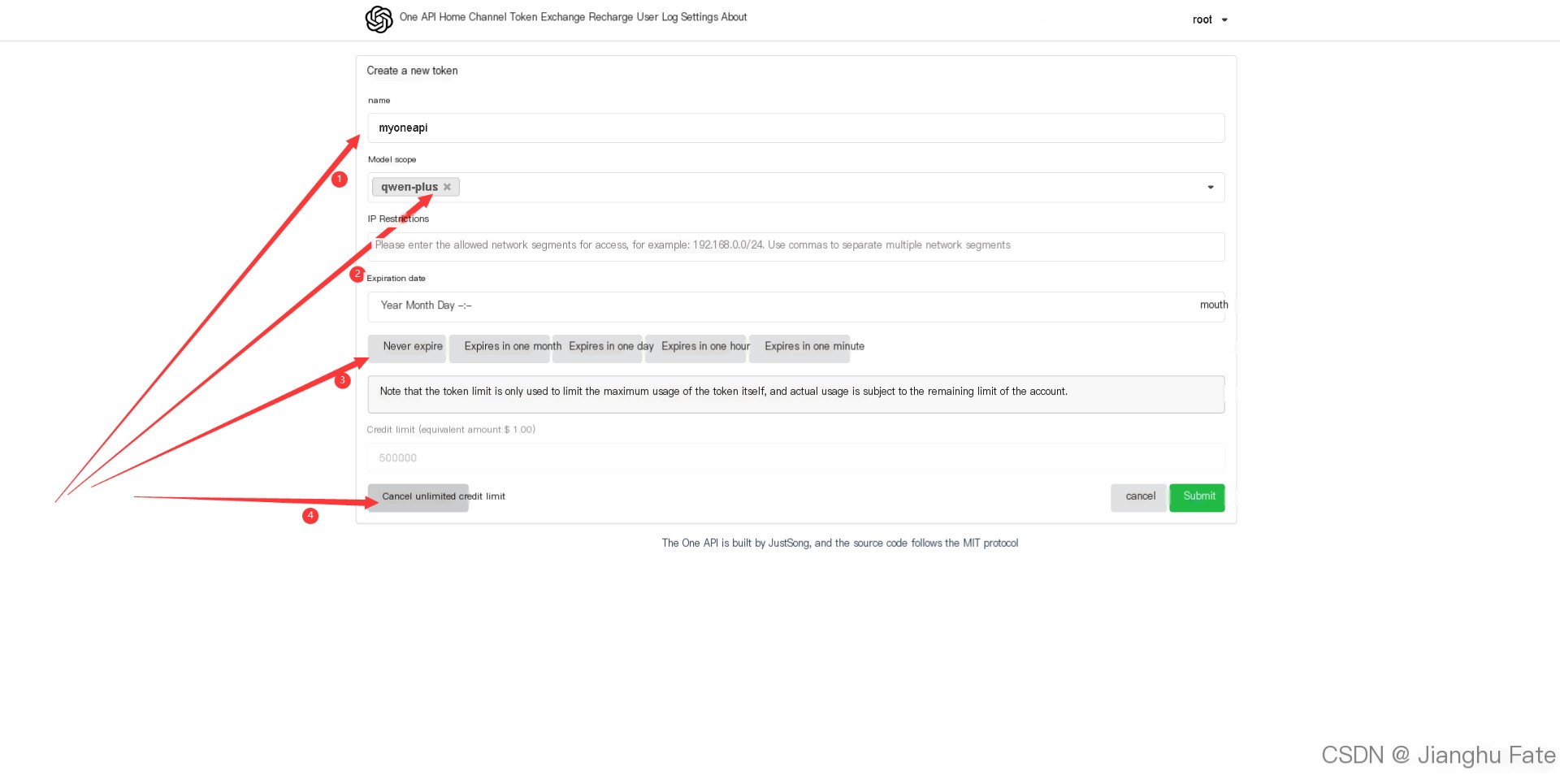

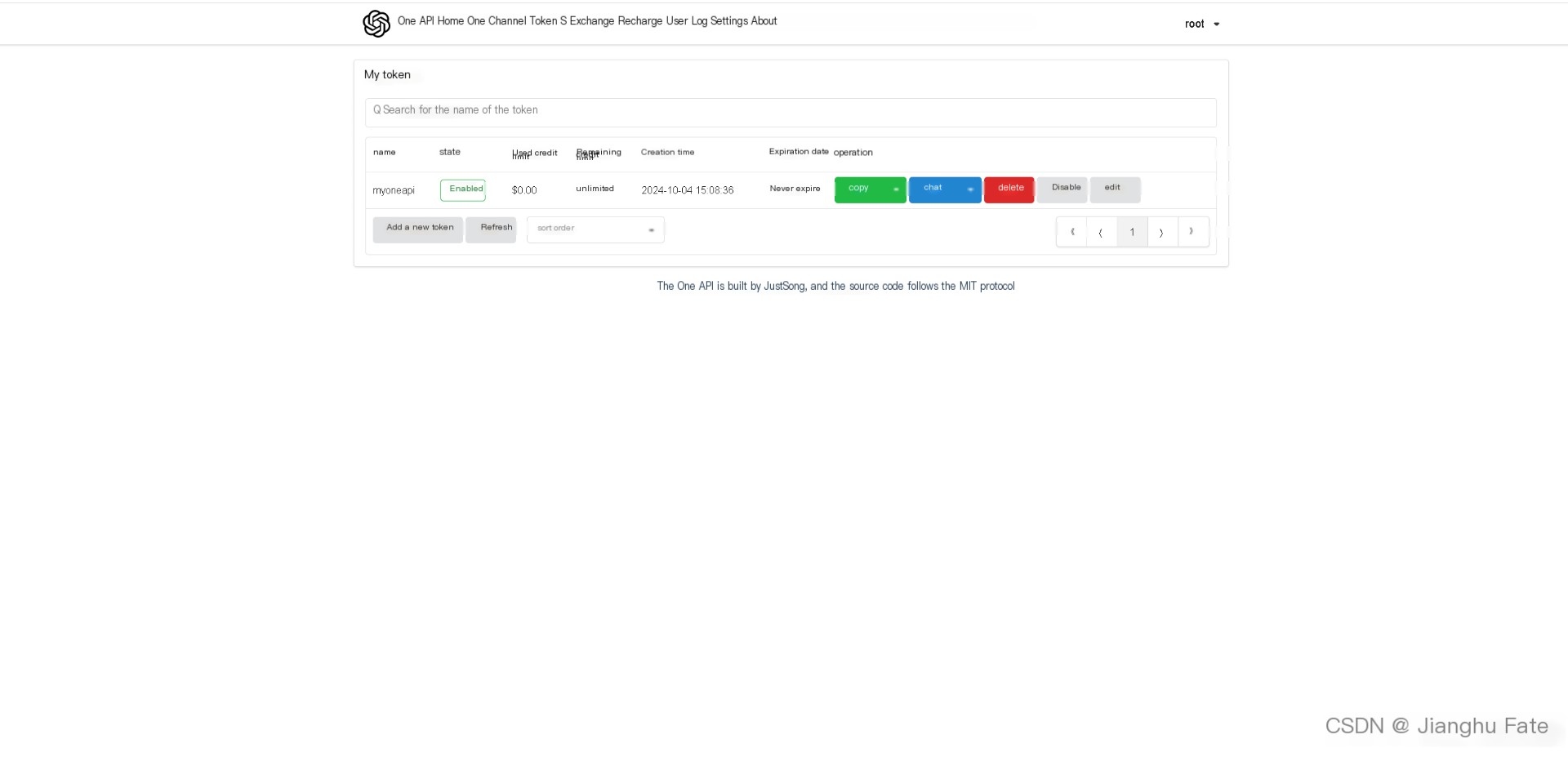

7.3 Add Token
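Once a token has been created on the Token page, it can be used as a Bearer key against One API's OpenAI-compatible endpoint. The sketch below is an illustration under stated assumptions: `ONE_API_URL`, `ONE_API_TOKEN`, and `MODEL` are hypothetical variables, and the model name must be replaced with one that the configured channel actually serves.

```shell
ONE_API_URL="${ONE_API_URL:-http://192.168.3.88:3000}"
ONE_API_TOKEN="${ONE_API_TOKEN:-}"   # paste the token copied from the Token page
MODEL="${MODEL:-gpt-3.5-turbo}"      # hypothetical; use a model your channel serves

# Minimal chat request body in the OpenAI chat completions format.
payload=$(printf '{"model":"%s","messages":[{"role":"user","content":"Hello"}]}' "$MODEL")

if [ -n "$ONE_API_TOKEN" ] && command -v curl >/dev/null 2>&1; then
    curl -s -m 30 "$ONE_API_URL/v1/chat/completions" \
        -H "Content-Type: application/json" \
        -H "Authorization: Bearer $ONE_API_TOKEN" \
        -d "$payload" || echo "request failed"
else
    echo "set ONE_API_TOKEN (and install curl) to send: $payload"
fi
```

Because the endpoint follows the OpenAI request format, existing OpenAI clients can usually be pointed at One API by changing only the base URL and the key.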

8. Summary

One API simplifies access to mainstream AI services (such as Azure, Anthropic Claude, Google PaLM 2 & Gemini) through a unified interface management and distribution system with centralized API key management and redistribution. It ships as a single executable file and as pre-built Docker images, so deployment takes only a few steps and works out of the box. For enterprises, developers, and researchers, it reduces the complexity of managing multiple AI services, whether they are integrating those services into existing systems or building quick prototypes.